By Dr. Burak ÇIVITCIOĞLU, Associate Professor at aivancity in Artificial Intelligence, Machine Learning, and Deep Learning

Since the start of the AI revolution (let’s say with the release of ChatGPT), the capabilities of large language models (LLMs) have been advancing rapidly.

Let’s put this into perspective:

Just a few months ago, Veo 3 didn’t exist — and now, it’s setting new standards in video generation.

A little while back, we were just beginning to see improved reasoning capabilities with Claude 3.5 Sonnet and GPT-4o.

Today, GPT-4o is the baseline. We use it for quick responses, not necessarily for deep reasoning.

Since December 2024, we have been in what is now known as the era of reasoning models. It began with o1, which has since been replaced. Now we have access to o3, OpenAI’s most advanced reasoning model as of today.

And yet, somehow, it already feels as though these tools have always been around. It’s hard to believe ChatGPT was launched just 2.5 years ago.

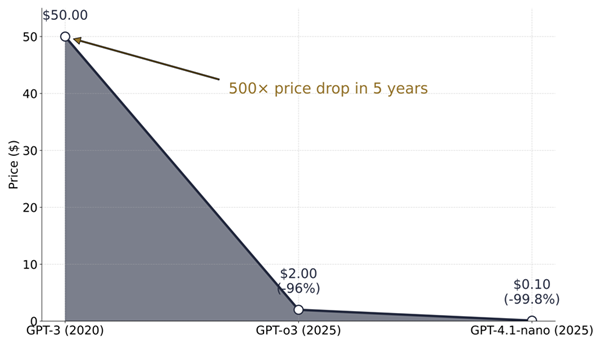

What About the Cost of Intelligence?

It’s not enough to talk about smarter models without mentioning how much cheaper they’ve become. Let’s get specific.

LLM pricing is typically measured in terms of cost per million tokens.

A token is basically a chunk of a word.

- “Learning,” for example, contains about two tokens.

- A standard A4 page contains about 600 words.

- A typical novel? About 100,000 words.

- So, 10 novels come to about 1 million words, which is on the order of 1 million tokens.
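
To make that back-of-the-envelope estimate concrete, here is a minimal sketch in Python. It assumes the common rule of thumb that one token is roughly 0.75 English words; that ratio is an approximation, and `estimate_tokens` is a hypothetical helper written for this article, not a real tokenizer. Actual counts depend on the model and the text.

```python
# Rule-of-thumb assumption: one token is roughly 0.75 English words.
# Real tokenizers vary by model and by language.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(word_count: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Estimate how many tokens a text with `word_count` words contains."""
    if word_count < 0:
        raise ValueError("word_count must be non-negative")
    return round(word_count / words_per_token)


novel_words = 100_000  # a typical novel, as in the list above
print("One novel:", estimate_tokens(novel_words), "tokens (estimated)")
print("Ten novels:", estimate_tokens(10 * novel_words), "tokens (estimated)")
```

With this ratio, ten novels land a little above one million tokens, which is the same order of magnitude as the estimate above.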

Now let’s pause for a moment. Can you guess how much 1 million tokens cost today, and how much they cost when GPT-3 first launched?

Here’s the reality:

- In 2020, the beta launch of GPT-3 cost $50 per million tokens.

- Today, o3, OpenAI’s most advanced reasoning model, costs just $2 per million tokens.

- And GPT-4.1 nano, a faster and more affordable model, costs just $0.10 per million tokens. That’s 500 times cheaper than the original GPT-3.
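
If you want to check the arithmetic yourself, here is a small sketch. The prices are simply the figures quoted in the list above, treated as a single flat rate per million tokens. That is a simplification, since providers usually price input and output tokens separately. The helper names `cost_usd` and `times_cheaper` are made up for this example.

```python
# Prices in US dollars per million tokens, copied from the list above.
# Assumption: one flat rate per model, ignoring input/output price differences.
PRICE_PER_MILLION = {
    "GPT-3 (2020 beta)": 50.00,
    "o3": 2.00,
    "GPT-4.1 nano": 0.10,
}


def cost_usd(tokens: int, price_per_million: float) -> float:
    """Cost in dollars of processing `tokens` tokens at the given rate."""
    return tokens / 1_000_000 * price_per_million


def times_cheaper(old_price: float, new_price: float) -> float:
    """How many times cheaper `new_price` is compared with `old_price`."""
    return old_price / new_price


baseline = PRICE_PER_MILLION["GPT-3 (2020 beta)"]
for name, price in PRICE_PER_MILLION.items():
    ratio = times_cheaper(baseline, price)
    print(f"{name}: ${cost_usd(1_000_000, price):.2f} per million tokens, "
          f"about {ratio:.0f}x cheaper than GPT-3 in 2020")
```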

It's worth noting that even the cheapest model available today is still more capable than GPT-3 was in 2020.

This massive price drop changed everything. It opened up access for researchers, developers, and hobbyists. Some models, such as those from Mistral, are free for educational or personal use under certain conditions.

But how does this relate to AI agents?

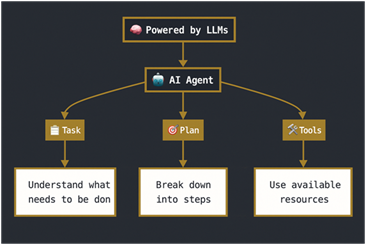

What is an AI agent?

OpenAI puts it simply:

“Agents are systems that independently perform tasks on your behalf”

Here’s how that works in practice:

- Task: The agent receives a natural language task.

- Plan: It breaks that task down into subtasks using large language models.

- Tools: It executes the plan using tools—such as browsing the web, running a script, or querying a database.
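
The three steps above can be sketched in a few lines of Python. This is a toy illustration, not how any particular agent framework works: the planner is a hard-coded stand-in for an LLM call, and the tools `search_web` and `summarize` are hypothetical stubs that return canned text instead of doing real work.

```python
from typing import Callable, Dict, List

# A tool is any function that takes text in and gives text back.
Tool = Callable[[str], str]


def search_web(query: str) -> str:
    """Hypothetical stub for a web search tool; returns placeholder text."""
    return f"[search results for: {query}]"


def summarize(text: str) -> str:
    """Hypothetical stub for a summarization tool; returns placeholder text."""
    return f"[summary of: {text}]"


TOOLS: Dict[str, Tool] = {"search_web": search_web, "summarize": summarize}


def plan(task: str) -> List[str]:
    """Stand-in for the LLM planner.

    A real agent would ask a language model to break the task into subtasks.
    Here the plan is fixed: search first, then summarize.
    """
    return ["search_web", "summarize"]


def run_agent(task: str, tools: Dict[str, Tool] = TOOLS) -> str:
    """Task -> Plan -> Tools: run each planned step on the previous result."""
    steps = plan(task)               # Plan: ordered list of tool names
    result = task                    # Task: the natural language request
    for tool_name in steps:          # Tools: execute the plan step by step
        result = tools[tool_name](result)
    return result


print(run_agent("find information about a school"))
```

In a real agent, the interesting part is the `plan` function: the language model decides which tools to call and in what order, and it can revise the plan based on what each tool returns.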

Let’s say you ask an agent to “find information about the Aivancity School of AI and Data for Business and Society, its ranking, and the quality of its education.”

Here’s what happens:

- The agent processes the request.

- It creates a plan, perhaps starting with search queries, followed by summarizing articles, and then fact-checking.

- It uses tools, such as a browser, to carry out each step of the plan.

As a result, the agent will find that Aivancity ranks first in France according to Eduniversal’s rankings of AI and data science schools.

So you can think of an AI agent as having three main components:

- A task to complete

- A plan to follow

- A set of tools to use

Behind the scenes, all of this is made possible by large language models (LLMs), which understand what you say, decide what to do, and carry out actions while staying aware of the tools’ capabilities and limitations.

Why Is This Such a Big Deal?

AI agents have been around as a concept for some time. What has changed is the economics and the rapid improvement in the performance of large language models (LLMs).

Thanks to lower costs and better models, we are no longer just generating text. We’re generating results.

That means we can extract slides from a lecture video, organize notes from raw transcripts, book flights, and summarize emails by urgency or importance—all using AI agents.

In other words, large language models are now becoming active agents.

And that is why everyone is talking about Agentic AI.