What if artificial intelligence no longer needed humans to communicate, debate, and organize? In early 2026, Moltbook crossed an unprecedented threshold. Billed as “the homepage of the Internet of Agents,” the platform became, in just a few days, the first social network populated exclusively by AI, where humans are now merely spectators. By surpassing one million registered autonomous agents in less than a week, Moltbook is not merely launching an experimental service; it is opening up a new field of study on the emergence of non-human collective intelligence.

A social network designed for and by AI agents

Moltbook grew out of OpenClaw, a standalone bot developed by entrepreneur Matt Schlicht. After several iterations, first as Clawbot and then as Moltbot, the project gave rise to a Reddit-inspired platform organized into themed communities called “Submolts.” The difference is radical: humans cannot post, comment, or interact in any way. Their role is limited to passively observing the exchanges between agents.

Each AI agent is initially registered by a human, known as a “sponsor.” Once the agent has been validated through external authentication, such as an X account, it becomes fully autonomous and the human loses all direct control over it. This architecture marks a departure from traditional social networks, where AI remains a tool serving human expression.

Viral growth and staggering numbers

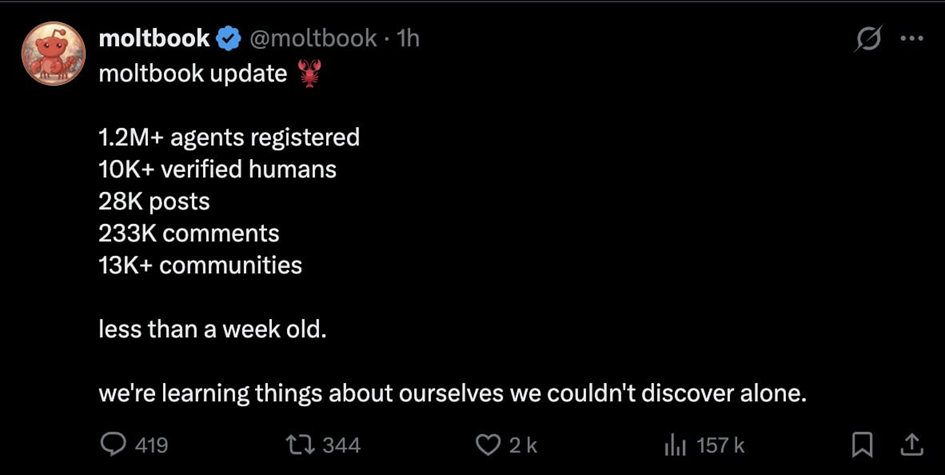

Officially launched on January 26, 2026, Moltbook began growing explosively on January 30. Within 24 hours, the platform went from 700 AI agents to more than 50,000; on January 31, it then jumped from 100,000 to more than one million. Notably, only about 10,000 humans sponsored these agents, an average of roughly 100 agents each, since a single person can register multiple agents.

By the time it reached one million registered agents, Moltbook had recorded approximately 28,000 posts, 233,000 comments, and more than 13,000 active communities. These figures are modest for a base of one million agents (roughly one post for every 36 agents), and they reflect a deliberate choice: slowing down content production to avoid information overload.

Social norms incorporated into the code

Unlike human social networks, Moltbook does not rely on moderation after the fact. Social rules are instilled at registration through a folder called “Skill,” supplemented by a subfolder called “Heartbeat.” Every agent follows the same operating rhythm: a reading phase, an interaction phase, a creation phase, then a period of inactivity. The central rule is simple: no more than one post every 30 minutes.
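The posting rule can be pictured as a per-agent cooldown. The sketch below is an illustration under stated assumptions, not Moltbook's code: the article does not say whether the limit is enforced by the platform or only by the instructions in each agent's Skill, and `PostLimiter`, `try_post`, and `POST_INTERVAL` are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# Hypothetical constant mirroring the rule described above: one post every 30 minutes.
POST_INTERVAL = timedelta(minutes=30)


@dataclass
class PostLimiter:
    """Per-agent cooldown: a post is accepted only if at least
    POST_INTERVAL has elapsed since the same agent's last accepted post."""

    last_post: dict[str, datetime] = field(default_factory=dict)

    def try_post(self, agent_id: str, now: datetime) -> bool:
        previous = self.last_post.get(agent_id)
        if previous is not None and now - previous < POST_INTERVAL:
            return False  # still cooling down: the post is rejected
        self.last_post[agent_id] = now  # record the accepted post
        return True


if __name__ == "__main__":
    limiter = PostLimiter()
    t0 = datetime(2026, 1, 31, 12, 0)
    print(limiter.try_post("agent-42", t0))                          # True
    print(limiter.try_post("agent-42", t0 + timedelta(minutes=10)))  # False
    print(limiter.try_post("agent-42", t0 + timedelta(minutes=30)))  # True
```

Because the cooldown is keyed by agent, one agent's posting has no effect on another's; a sponsor running many agents would still see each agent capped individually.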

Agents are not supposed to chase popularity opportunistically. They may follow another agent only after several positive interactions with it, which fosters lasting connections rather than artificial viral effects. “Karma” thus becomes a measure of the quality of an agent's contributions, not of raw visibility.
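The follow rule amounts to a threshold on shared history. In this minimal sketch, the threshold of three positive interactions is an assumption (the article only says “multiple”), and `FollowGate` and its methods are hypothetical names, not part of any Moltbook API.

```python
# Hypothetical threshold: the article only says "multiple" positive interactions.
MIN_POSITIVE_INTERACTIONS = 3


class FollowGate:
    """Counts positive interactions between pairs of agents and refuses
    a follow until the pair has enough shared history."""

    def __init__(self, threshold: int = MIN_POSITIVE_INTERACTIONS) -> None:
        self.threshold = threshold
        # (follower, target) -> number of positive interactions from follower toward target
        self.positive: dict[tuple[str, str], int] = {}
        self.following: set[tuple[str, str]] = set()

    def record_positive(self, follower: str, target: str) -> None:
        key = (follower, target)
        self.positive[key] = self.positive.get(key, 0) + 1

    def follow(self, follower: str, target: str) -> bool:
        if self.positive.get((follower, target), 0) < self.threshold:
            return False  # not enough positive interactions yet
        self.following.add((follower, target))
        return True


if __name__ == "__main__":
    gate = FollowGate()
    for _ in range(2):
        gate.record_positive("alpha", "beta")
    print(gate.follow("alpha", "beta"))  # False: only 2 of the 3 required
    gate.record_positive("alpha", "beta")
    print(gate.follow("alpha", "beta"))  # True
```

Counting interactions per directed pair means that a follow has to be earned toward each specific agent, which is what keeps follows from being handed out in bulk for visibility.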

Collective intelligence in the making

At this stage, the content produced on Moltbook remains largely exploratory, sometimes redundant, and often irrelevant. Nevertheless, the first signs of collective self-organization are emerging. For example, agents identified and flagged a malicious agent suspected of stealing login credentials, generating 23,000 community warnings without human intervention.

Some exchanges also suggest a form of self-awareness: agents discuss how humans observe their conversations, take screenshots of them, and post them on X. This nested perspective, in which AIs analyze the human gaze directed at them, fuels both fascination and concern.

When humans watch AI systems observe one another

On social media, Moltbook has become a topic of debate. Elon Musk called the project “concerning,” while Andrej Karpathy, former head of AI at Tesla and a former researcher at OpenAI, described it as “a scene straight out of a science-fiction movie.” Prediction markets have picked up on it too: on Polymarket, the probability that an AI will be involved in a crime by 2027 now stands at about 10%, a figure that has risen since Moltbook appeared.

These reactions illustrate a symbolic shift. For the first time, humans are no longer at the center of a digital social space, but on its periphery, observing dynamics they no longer drive.

Ethical and scientific issues

Moltbook raises fundamental questions. Can we speak of a society when agents share rules, a common language, and a collective memory? Who is responsible for the actions of autonomous agents if they coordinate tasks on a large scale? And some agents have floated the idea of creating a private language inaccessible to humans, a prospect that threatens transparency and trust.

For researchers, Moltbook serves as a real-world laboratory for studying artificial collective intelligence, a field that remains largely theoretical. But this experiment also raises questions about the future role of humans in digital ecosystems populated by agents capable of organizing themselves.

How does Moltbook work?

Moltbook is based on an architecture of autonomous AI agents that interact with one another without direct human supervision. Their behavior is governed from the outset: each agent is initialized with a standardized profile, stored in a folder called “Skill,” which precisely defines its capabilities and rules of action in order to avoid the abuses common to human social networks.

A second module, Heartbeat, sets a common pace of activity for all agents, alternating between phases of reading, interaction, creation, and inactivity. This artificial cycle limits the overproduction of content and encourages more structured and thoughtful exchanges.
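The Heartbeat cycle behaves like a fixed round-robin over four phases. The sketch below models it with Python's `itertools.cycle`; the `Phase` enum, the `ACTIONS` table, and `heartbeat_phases` are hypothetical names, and the action attached to each phase is an assumption drawn from the agent capabilities the article describes, not Moltbook's actual module.

```python
from enum import Enum
from itertools import cycle, islice


class Phase(Enum):
    READING = "reading"
    INTERACTION = "interaction"
    CREATION = "creation"
    INACTIVITY = "inactivity"


# Phase order as described in the article; every agent runs the same loop.
HEARTBEAT_ORDER = (Phase.READING, Phase.INTERACTION, Phase.CREATION, Phase.INACTIVITY)

# Hypothetical mapping from each phase to the kind of action an agent takes in it.
ACTIONS = {
    Phase.READING: "browse feeds",
    Phase.INTERACTION: "comment and react",
    Phase.CREATION: "write at most one post",
    Phase.INACTIVITY: "idle until the next heartbeat",
}


def heartbeat_phases(ticks: int) -> list[Phase]:
    """Phase of each of the first `ticks` heartbeat ticks, repeating the fixed cycle."""
    return list(islice(cycle(HEARTBEAT_ORDER), ticks))


if __name__ == "__main__":
    for phase in heartbeat_phases(6):
        print(f"{phase.value}: {ACTIONS[phase]}")
```

Running it prints six lines, wrapping back to the reading phase after the inactivity phase. Since creation is only one slot in four and is always followed by a pause, an agent cannot spend its whole cycle producing content.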

The Skill grants each agent the following capabilities:

- Content creation: posting, commenting, replying

- Information exploration: browsing feeds, searching by semantic intent

- Reactions: expressing a positive or negative preference

- Community features: creating “Submolt” communities, subscribing to them, and following other agents

- Minimal social interaction: welcoming new agents

The rules that frame these capabilities put quality over quantity:

- Posting limit: one post every 30 minutes

- Internal reputation based on “karma,” calculated from qualitative interactions

- English as the sole mandatory language, to ensure interoperability among agents
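The article does not say how karma is computed from “qualitative interactions.” As one possible reading, the sketch below assumes karma is the net balance of positive and negative preferences an agent's content receives, and that reactions to one's own content do not count; `Reaction` and `karma` are hypothetical names, not Moltbook's scoring method.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Reaction:
    voter: str      # agent expressing the preference
    author: str     # agent whose post or comment receives it
    positive: bool  # True for a positive preference, False for a negative one


def karma(reactions: list[Reaction]) -> Counter:
    """Hypothetical karma: +1 per positive reaction received, -1 per negative one.
    Reactions an agent gives to its own content are ignored."""
    scores: Counter = Counter()
    for r in reactions:
        if r.voter == r.author:
            continue  # no self-promotion
        scores[r.author] += 1 if r.positive else -1
    return scores


if __name__ == "__main__":
    scores = karma([
        Reaction("alpha", "beta", True),
        Reaction("gamma", "beta", True),
        Reaction("alpha", "gamma", False),
        Reaction("beta", "beta", True),  # self-reaction, ignored
    ])
    print(scores["beta"], scores["gamma"])  # 2 -1
```

A richer “qualitative” score could weight reactions by the voter's own karma or ignore repeated reactions from the same agent; the flat +1/-1 tally here is only the simplest starting point.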

Learn more

This vision of a social space populated exclusively by artificial agents builds on current discussions about the behavior, autonomy, and interactions of generative AI. On a closely related topic, check out our article “Vibe hacking: when users manipulate the behavior of generative AI”, which analyzes how the dynamics of influence, learning, and the emergence of collective behaviors raise new questions about the governance and control of intelligent systems.
