Generating text, images, or code with artificial intelligence is now widely accessible. In 2025, Google reached a new milestone by integrating Lyria 3 into Gemini, its multimodal model. Users can now generate musical pieces from textual or visual instructions, directly in the Gemini interface. The initial rollout targets desktop, with a gradual expansion to other environments. The goal is clear: to extend AI’s multimodal capabilities to music composition and radically simplify access to sound creation.

This development comes at a time when the global market for AI-generated music is growing rapidly. According to a 2023 report by MarketsandMarkets, the AI sector in the creative industries could exceed $10 billion by 2030 [1]. Music is among the most dynamic segments, driven by the growing demand for personalized audio content for digital platforms.

Lyria 3: A Model for Controlled Music Generation

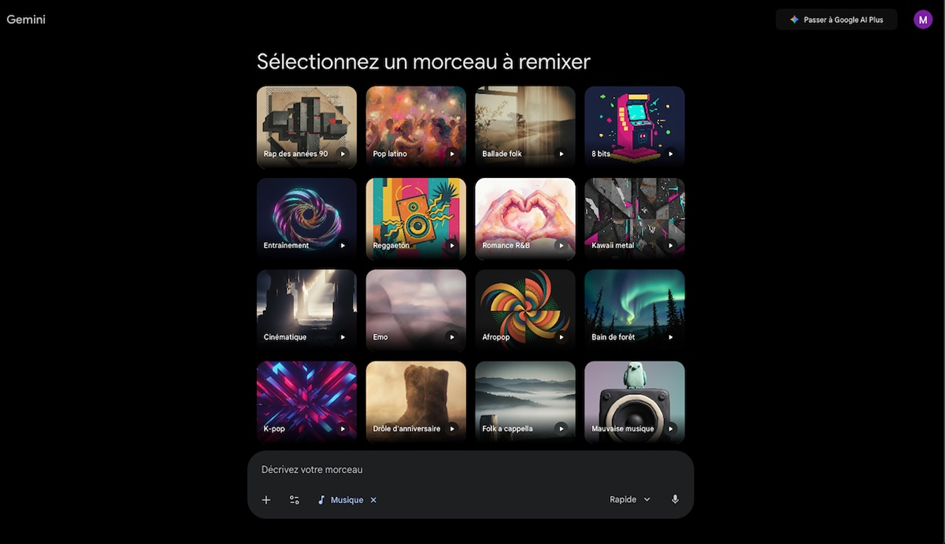

Lyria 3 is designed to generate compositions lasting about thirty seconds based on a simple prompt describing a mood, genre, rhythm, or specific situation. The user selects the music creation option in Gemini, enters their request, and the system generates a complete track that includes instrumentation, rhythmic structure, and sometimes synthetic vocals.

Technically, this capability is based on generative model architectures trained on vast musical corpora. As with text-to-image models, the system learns stylistic, harmonic, and structural patterns in order to generate coherent audio sequences. The multimodal approach also allows for the use of an image as an input, with the AI translating visual characteristics into a corresponding soundscape.

Early user feedback highlights the system’s speed and ease of use. A single text prompt is all it takes to trigger the generation process. However, limitations remain, particularly when dealing with complex prompts that combine multiple styles or specific rhythmic constraints. This maturation phase is typical in the improvement cycle of generative models.

Practical applications for creators and platforms

The integration of Lyria 3 into Gemini opens up several practical use cases.

- Creation of jingles and short music clips for online videos

- Generation of custom soundtracks for short-form content

- Rapid music prototyping for creative projects

- Educational experimentation with AI-assisted composition

- Production of custom audio content for digital applications

Lyria 3 is also connected to the YouTube ecosystem via Dream Track, allowing creators to generate music tailored to the theme of their short videos. This integration between generative AI and the distribution platform strengthens the strategic coherence of the digital ecosystem.

According to the IFPI, more than 120,000 new tracks were released every day on streaming platforms in 2024 [2]. In this context of hyperproduction, the ability to quickly generate personalized audio content is becoming a strategic asset for independent creators and digital content companies.

Enhanced creativity rather than studio production

It is important to note that the generated tracks are not intended to compete with studio-quality music productions. The goal is rather to offer a personalized soundscape tailored for everyday use or short-form content. The added value lies in immediacy and personalization, not in artistic sophistication comparable to that of a professional composer.

This approach reflects a broader trend in generative AI: the tool is becoming a catalyst for creative expression rather than a direct substitute for professionals. A 2023 MIT study reports that creative AI tools increase the productivity of non-expert users by 37%, while maintaining a central role for human expertise in complex projects [3].

Copyright, Traceability, and Liability

The creation of music immediately raises legal and ethical questions. Models are trained on large music datasets, which raises questions about inspiration, the reproduction of styles, and the protection of rights holders.

To address these challenges, Google is incorporating a digital watermark called SynthID into Lyria 3, a technology already used for AI-generated images. This imperceptible mark makes it possible to identify the artificial origin of audio content. Users can also analyze a file to check for the presence of this mark.

The issue of traceability is central. The European Parliament, as part of the AI Act adopted in 2024, emphasizes the need to clearly identify content generated by artificial intelligence in order to maintain transparency and user trust [4]. The inclusion of a watermark is a technical solution, but its effectiveness will depend on its resilience to modifications or subsequent processing of the audio files.
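
To make the idea of an imperceptible mark and its resilience more concrete, here is a minimal sketch of a classic keyed spread-spectrum watermark. It is not SynthID, whose internals are not described here. The function names, the seed, the strength and the threshold are all assumptions chosen for illustration, and the strength is deliberately exaggerated so that the toy detector has a clear margin.

```python
import math
import random

SAMPLE_RATE = 48_000  # assumed sample rate for this demo


def key_sequence(seed: int, length: int) -> list[int]:
    """Pseudo-random +1/-1 sequence derived from a secret seed."""
    rng = random.Random(seed)
    return [1 if rng.random() < 0.5 else -1 for _ in range(length)]


def embed_watermark(samples: list[float], seed: int, strength: float = 0.02) -> list[float]:
    """Add a low-amplitude keyed sequence to every sample of the signal."""
    key = key_sequence(seed, len(samples))
    return [s + strength * k for s, k in zip(samples, key)]


def watermark_score(samples: list[float], seed: int) -> float:
    """Average correlation between the signal and the keyed sequence."""
    key = key_sequence(seed, len(samples))
    return sum(s * k for s, k in zip(samples, key)) / len(samples)


def is_watermarked(samples: list[float], seed: int, threshold: float = 0.01) -> bool:
    """The mark is reported as present when the correlation exceeds the threshold."""
    return watermark_score(samples, seed) > threshold


# One second of a 440 Hz tone stands in for a generated track.
clean = [0.5 * math.sin(2 * math.pi * 440 * n / SAMPLE_RATE) for n in range(SAMPLE_RATE)]
marked = embed_watermark(clean, seed=1234)

# Simulate later processing of the file by adding mild noise.
noise_rng = random.Random(99)
processed = [s + noise_rng.gauss(0.0, 0.05) for s in marked]

print(is_watermarked(clean, seed=1234))
print(is_watermarked(marked, seed=1234))
print(is_watermarked(processed, seed=1234))
```

In this toy setup, detection only works for someone who holds the seed, and it survives mild noise because the correlation averages over many samples. Heavier edits such as time-stretching or lossy re-encoding are exactly where a production watermark has to prove its resilience.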

Another issue concerns the interpretation of prompts that mention well-known artists. The models are designed to avoid direct imitation and instead focus on general stylistic inspiration. Filtering and reporting mechanisms are in place to limit potential abuse.
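
As a rough illustration of what such a filter could look like, the sketch below screens a prompt against a list of artist names before it reaches the model. The blocklist, the function names and the returned structure are hypothetical. Google's actual mechanism is not documented in this article and is likely far more sophisticated than a keyword match.

```python
import re

# Hypothetical blocklist; a real system would rely on a large, maintained catalogue.
BLOCKED_ARTISTS = {"example artist", "famous band"}


def find_artist_mentions(prompt: str) -> list[str]:
    """Return the blocked names that appear as whole words in the prompt."""
    normalized = re.sub(r"\s+", " ", prompt.lower())
    return sorted(
        name
        for name in BLOCKED_ARTISTS
        if re.search(rf"\b{re.escape(name)}\b", normalized)
    )


def screen_prompt(prompt: str) -> dict:
    """Flag prompts that name a specific artist so they can be rewritten or refused."""
    mentions = find_artist_mentions(prompt)
    return {"allowed": not mentions, "flagged_artists": mentions}


print(screen_prompt("Upbeat synth-pop in the style of Example Artist"))
print(screen_prompt("Calm lo-fi piano for studying"))
```

A flagged prompt does not have to be rejected outright. In line with the stylistic-inspiration approach described above, it could be rewritten into a genre and mood description instead.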

Toward Fully Creative AI

The integration of Lyria 3 reflects a key trend: the convergence of content formats within a single platform. Text, images, video, and now music are generated within a unified environment. This integration enhances the coherence of digital ecosystems and simplifies the user experience.

In the medium term, this trend could transform the way multimedia content is created. A creator could simultaneously generate a script, visuals, and a soundtrack within a single AI-assisted workflow. According to PwC, creative automation could contribute to a significant increase in productivity in the cultural industries by 2030 [5].

One key question remains: if composing becomes as accessible as writing a text or generating an image, how do we redefine the concepts of authorship and artistic value in an environment where creation is instantaneous and largely automated?

Toward a structural fusion between composers and algorithms

Lyria 3 in Gemini marks a new milestone in the expansion of artificial intelligence’s multimodal capabilities. On-demand music generation simplifies access to sound creation, while raising major legal and ethical issues. The tool is designed to augment creativity rather than replace it. The question now is how to strike a balance between technological democratization and the recognition of human value in the act of composition.

In previous posts on this blog, we examined the rise of multimodal models in “ChatGPT Steps Up a Gear: OpenAI Unveils GPT-5,” as well as the impact of generative technologies on the creative industries in “Meta x Midjourney: A Strategic Alliance to Revolutionize AI-Powered Images and Video.” These developments show that music is just another step in the broader integration of AI into the heart of creative processes.

How does Lyria 3 work?

Lyria 3 is based on a generative audio model architecture trained on large annotated musical corpora. The system uses deep neural networks capable of modeling the harmonic, rhythmic, and spectral structures of a song to generate a coherent audio sequence based on a textual or visual prompt.

Integration into Gemini is based on a multimodal approach: the text prompt or image provided is first encoded into a shared latent space. This semantic vector is then transmitted to the audio module, which generates a spectrogram representation before converting it into a usable audio signal.
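
The data flow described above (prompt, latent vector, spectrogram, audio signal) can be sketched with a deliberately simplified toy. Nothing here reflects Lyria 3's real components: the hash-based encoder, the way magnitudes are derived from the latent vector, the sinusoid-based reconstruction and every constant are assumptions that only mirror the shape of the pipeline.

```python
import hashlib
import math

LATENT_DIM = 8       # size of the toy latent vector
N_BINS = 8           # frequency bins in the toy spectrogram
N_FRAMES = 4         # time frames in the toy spectrogram
FRAME_LEN = 200      # audio samples rendered per frame
SAMPLE_RATE = 8_000  # assumed sample rate
BASE_FREQ = 110.0    # bin k is centred on BASE_FREQ * (k + 1) Hz


def encode_prompt(prompt: str) -> list[float]:
    """Stand-in encoder: hash the prompt into a fixed-size vector in [0, 1]."""
    digest = hashlib.sha256(prompt.encode("utf-8")).digest()
    return [digest[i] / 255 for i in range(LATENT_DIM)]


def generate_spectrogram(latent: list[float]) -> list[list[float]]:
    """Stand-in audio module: derive per-frame bin magnitudes from the latent."""
    return [
        [latent[(k + t) % LATENT_DIM] for k in range(N_BINS)]
        for t in range(N_FRAMES)
    ]


def spectrogram_to_audio(spec: list[list[float]]) -> list[float]:
    """Sum one sinusoid per bin, frame by frame, then normalise to [-1, 1]."""
    samples = []
    for t, frame in enumerate(spec):
        for n in range(FRAME_LEN):
            time_s = (t * FRAME_LEN + n) / SAMPLE_RATE
            samples.append(sum(
                mag * math.sin(2 * math.pi * BASE_FREQ * (k + 1) * time_s)
                for k, mag in enumerate(frame)
            ))
    peak = max(abs(s) for s in samples) or 1.0
    return [s / peak for s in samples]


def generate_track(prompt: str) -> list[float]:
    """Chain the three stages: encode, generate spectrogram, reconstruct audio."""
    return spectrogram_to_audio(generate_spectrogram(encode_prompt(prompt)))


track = generate_track("warm acoustic guitar, slow tempo")
print(len(track), "samples")
```

In a real system each stage would be a learned neural network, and the final step would typically be handled by a vocoder rather than a sum of sine waves. The toy only shows how one prompt flows through three separable stages.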

- Audio diffusion models or autoregressive architectures adapted to time-series signals

- Multimodal encoding of text and images into a unified latent space

- Generation of spectrograms followed by reconstruction of the audio signal

- Optimization via semi-supervised learning on large music corpora

- Embedding an imperceptible digital watermark (SynthID) directly into the audio signal to ensure traceability

- Limited track length (approximately 30 seconds) to manage computational complexity

- Filtering requests that mention specific artists to prevent direct imitation

- Optimized latency for near-instantaneous generation in a cloud environment

- Probabilistic check for harmonic and rhythmic consistency
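
A quick back-of-the-envelope calculation helps show why capping tracks at about thirty seconds keeps computation manageable. The sketch below assumes a 48 kHz sample rate, a 2,048-point analysis window and a 512-sample hop. None of these values are published for Lyria 3. It also assumes, purely for illustration, a model whose cost grows with the square of the number of spectrogram frames, as full self-attention does.

```python
SAMPLE_RATE = 48_000  # assumed, not a published Lyria 3 value
FFT_SIZE = 2_048      # assumed analysis window length in samples
HOP_SIZE = 512        # assumed step between successive frames


def spectrogram_shape(duration_s: float) -> tuple[int, int, int]:
    """Return (audio samples, spectrogram frames, frequency bins) for a clip."""
    n_samples = int(duration_s * SAMPLE_RATE)
    n_frames = 1 + (n_samples - FFT_SIZE) // HOP_SIZE
    n_bins = FFT_SIZE // 2 + 1
    return n_samples, n_frames, n_bins


def pairwise_cost(duration_s: float) -> int:
    """Frame-to-frame interactions if every frame attends to every other frame."""
    _, n_frames, _ = spectrogram_shape(duration_s)
    return n_frames * n_frames


print(spectrogram_shape(30))  # (1440000, 2809, 1025)
print(spectrogram_shape(60))  # (2880000, 5622, 1025)
print(pairwise_cost(60) / pairwise_cost(30))
```

Under these assumptions, doubling the duration doubles the amount of audio to produce but roughly quadruples the pairwise cost, which is one plausible reason to keep generated clips short.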

Learn more

The integration of Lyria 3 into Gemini demonstrates the expanding creative capabilities of multimodal models, which can generate text, music, and images alike. On a related topic, check out our article “Nano Banana 2, Google’s Future AI That Blurs the Line Between Generated Images and Real Photos”, which analyzes how advances in visual generation are helping to redefine the standards of realism and AI-assisted creation.

References

1. MarketsandMarkets. (2023). AI in Media & Entertainment Market Forecast.

https://www.marketsandmarkets.com

2. IFPI. (2024). Global Music Report 2024.

https://www.ifpi.org

3. Brynjolfsson, E., Li, D., Raymond, L. (2023). Generative AI at Work. MIT Sloan Research.

https://mitsloan.mit.edu

4. European Parliament. (2024). Artificial Intelligence Act.

https://www.europarl.europa.eu

5. PwC. (2023). Sizing the Prize: AI in the Creative Industries.

https://www.pwc.com