By Nasreddine Menacer | Professor and Head of the AI Clinic and Online Programs at aivancity

1- AI: Much More Than Just Text and Image Generators

Artificial intelligence has recently taken the media by storm with tools capable of writing stories, generating stunning images, or composing music. Names like ChatGPT, DALL·E, and Midjourney have become familiar. They inspire a mix of fascination, concern, and admiration. Yet, to believe that AI is limited to these spectacular feats is like judging an iceberg by its visible tip alone.

AI has been shaping our daily lives for much longer than we realize, often in subtle yet decisive ways. It optimizes delivery routes, forecasts the weather, secures banking transactions, and helps diagnose diseases. These are less visible forms of AI, but they are just as powerful—and sometimes even more useful.

As a robotics engineer who went on to become a data scientist and a faculty researcher, I’ve seen AI evolve into the boom we’re experiencing today. I myself used simple neural networks long before “text generators” were even mentioned in the media. And I can assure you: artificial intelligence is a forest far vaster than the single tree of generative AI.

My goal in writing this article is to encourage you to take a step back, broaden your perspective, and (re)discover this fascinating discipline in all its diversity. Because to use it wisely and responsibly, you first need to understand what it is—and what it isn’t.

2- A long-standing history

Artificial intelligence isn’t a new phenomenon, and ChatGPT certainly isn’t where it all began. It is part of a rich history marked by cycles of enthusiasm, disappointment… and spectacular comebacks.

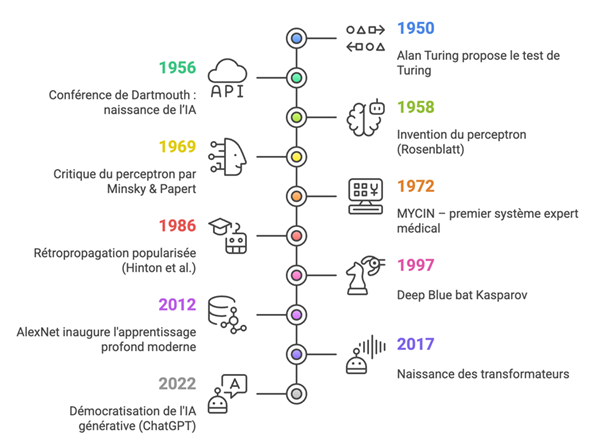

It all began in 1950, when British mathematician Alan Turing published his seminal paper “Computing Machinery and Intelligence.” In it, he introduced what became known as the Turing test, designed to determine whether a machine can “think,” or at least convincingly simulate a human conversation.

In 1956, at the founding workshop held at Dartmouth College, pioneers such as John McCarthy (who coined the term “Artificial Intelligence”), Marvin Minsky, Claude Shannon, and Nathan Rochester put forward the conjecture that intelligence could be described with such precision that a machine could be built to simulate it.

The following decades saw the emergence of expert systems, capable of reasoning based on explicit rules. For example, MYCIN (1972), developed at Stanford, assisted doctors in diagnosing infectious diseases. These AI systems relied on knowledge bases and inference engines: effective, but not very flexible. Their rigidity contributed to the “AI winter” of the late 1980s, a period of disillusionment marked by declining funding.

In 1997, IBM’s computer Deep Blue defeated world chess champion Garry Kasparov. This symbolic moment marked the return of AI to the collective imagination, even though the victory relied more on brute force (combinatorial and heuristic search) than on any form of “intelligence.”

The Rise of Neural Networks: From Perceptrons to Transformers

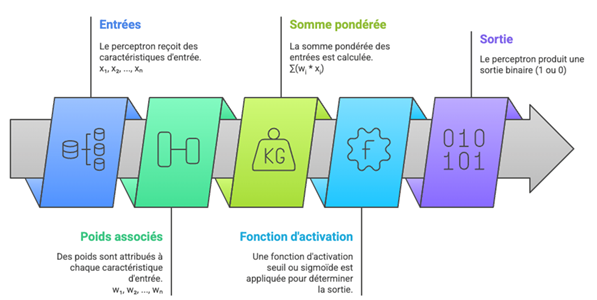

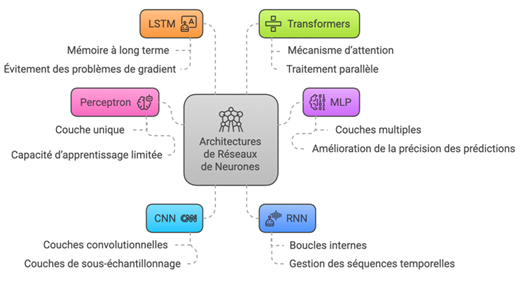

The history of artificial neural networks begins much earlier, in 1958, with Frank Rosenblatt’s invention of the perceptron. This model, simple yet revolutionary at the time, was inspired by the functioning of biological neurons: it takes numerical inputs, assigns weights to them, calculates a weighted sum, and applies an activation function to produce an output.
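The steps just described (inputs, weights, weighted sum, activation) fit in a few lines of Python. This is an illustrative sketch rather than Rosenblatt's original formulation: the step activation, the weights, and the bias are hand-picked assumptions, chosen so that the unit computes a logical AND.

```python
def step(z: float) -> int:
    """Step activation: output 1 when the weighted sum is non-negative."""
    return 1 if z >= 0 else 0


def perceptron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through the activation."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    return step(weighted_sum)


# Hand-picked (not learned) parameters: the unit fires only when both inputs are 1.
AND_WEIGHTS = [1.0, 1.0]
AND_BIAS = -1.5

and_table = {
    (a, b): perceptron([a, b], AND_WEIGHTS, AND_BIAS)
    for a in (0, 1)
    for b in (0, 1)
}
```

Here `and_table` maps each pair of binary inputs to the unit's output. In a trained perceptron, the weights and bias would be adjusted from examples instead of being set by hand.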

But its limitations soon became apparent. In 1969, Minsky and Papert demonstrated that single-layer perceptrons could not solve problems that are not linearly separable, such as the XOR function. Neural networks fell out of favor for well over a decade.

It was in the 1980s that multilayer perceptrons (MLPs) made a comeback, thanks to the backpropagation algorithm, popularized by Rumelhart, Hinton, and Williams (1986). This method makes it possible to efficiently train networks with multiple hidden layers, capable of learning more complex representations.
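The XOR problem shows what a hidden layer adds. In the minimal sketch below, two hidden units (an OR and a NAND) feed one output unit, and together they compute XOR, which no single unit of this kind can do. The weights are set by hand for illustration. Backpropagation would instead learn suitable weights from examples, and it needs a differentiable activation rather than the step function assumed here.

```python
def step(z: float) -> int:
    """Step activation: output 1 when the weighted sum is non-negative."""
    return 1 if z >= 0 else 0


def neuron(inputs, weights, bias):
    """One unit: weighted sum plus bias, then the step activation."""
    return step(sum(x * w for x, w in zip(inputs, weights)) + bias)


def xor_network(a: int, b: int) -> int:
    """Two-layer network with hand-set (not learned) weights."""
    h_or = neuron([a, b], [1.0, 1.0], -0.5)      # fires if at least one input is 1
    h_nand = neuron([a, b], [-1.0, -1.0], 1.5)   # fires unless both inputs are 1
    return neuron([h_or, h_nand], [1.0, 1.0], -1.5)  # AND of the two hidden units


xor_outputs = [xor_network(a, b) for a in (0, 1) for b in (0, 1)]
```

The hidden units re-describe the inputs in a form that the output unit can separate with a single threshold. That re-description is the kind of intermediate representation a trained multilayer network learns.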

My personal experience: the transition phase

In 2013, I was a robotics engineer, and I was using these simple perceptrons in practical applications such as edge detection in medical images. Although limited, these models were already useful in applied engineering. I built them in MATLAB/Simulink, manually modeling the connections and activation functions.

It was a pivotal moment: simple neural networks were still widely used in industry, but academic research was beginning to shift toward deep architectures, made possible by advances in computing (GPUs), the abundance of data, and above all… a breakthrough that would change everything.

2012: The AlexNet Breakthrough and the Golden Age of Deep Learning

The real breakthrough in deep learning came in 2012, when the AlexNet model, developed by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton, dominated the ImageNet competition. This convolutional neural network (CNN) far outperformed traditional approaches to image recognition. Since then, a series of architectures has enabled the application of AI to numerous fields.

Key foundational publications to note

- Rosenblatt (1958) – The Perceptron: A Probabilistic Model for Information Storage and Organization in the Brain

- Rumelhart, Hinton & Williams (1986) – Learning Representations by Back-Propagating Errors

- Hochreiter & Schmidhuber (1997) – Long Short-Term Memory

- Krizhevsky, Sutskever & Hinton (2012) – ImageNet Classification with Deep Convolutional Neural Networks

- Vaswani et al. (2017) – Attention Is All You Need

3- Generative AI: A Star That Hides the Forest

Since 2022, artificial intelligence has become a fixture in the media landscape, driven by one of its most spectacular branches: generative AI.

With tools like ChatGPT, DALL·E, Midjourney, and OpenAI’s Sora, this technology is capable of writing stories, painting pictures, composing music, animating characters, synthesizing voices, and more.

Its rise to prominence has radically changed perceptions of AI: long viewed as a technology for optimization or automation, it is now seen as creative, and sometimes even magical. Viral demonstrations, striking deepfakes, and instant summaries fuel this fascination. Generative AI shines like a media star—captivating, impressive… and sometimes unsettling. But this dazzling light must not make us forget what it barely illuminates: the vast “forest” of other forms of artificial intelligence, less visible but just as fundamental to our daily lives.

The Forest of Classical AI: Discreet but Omnipresent

Behind the spotlight lies a dense forest of non-generative AI applications—often invisible to the general public but absolutely central to our lives. These AI systems do not generate original content, but they predict, classify, optimize, detect, recommend, correct, segment, and anticipate… And they’ve been doing so for years. Here are some concrete examples and the fields in which they are used:

Recommendation systems

These algorithms are ubiquitous in e-commerce and streaming platforms. For example, on Amazon, they analyze your past purchases and search history to suggest relevant products. On Spotify, they analyze your listening habits to recommend personalized playlists. These AI systems rely on techniques such as collaborative filtering and pattern analysis to predict your preferences.
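Collaborative filtering can be sketched on a toy example. The ratings below are invented, and the scoring rule (a similarity-weighted sum of other users' ratings) is one simple variant among many. It is not a description of how Amazon or Spotify actually work.

```python
from math import sqrt

# Hypothetical ratings on a 1-5 scale: user -> {item: rating}.
ratings = {
    "alice": {"jazz": 5, "rock": 1, "blues": 4},
    "bob": {"jazz": 4, "rock": 1, "blues": 5, "soul": 5},
    "carol": {"jazz": 1, "rock": 5, "metal": 5},
}


def cosine_similarity(a, b):
    """Cosine similarity computed over the items both users have rated."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[i] * b[i] for i in shared)
    norm_a = sqrt(sum(a[i] ** 2 for i in shared))
    norm_b = sqrt(sum(b[i] ** 2 for i in shared))
    return dot / (norm_a * norm_b)


def recommend(user, all_ratings):
    """Rank items the user has not rated, weighting each rating by user similarity."""
    scores = {}
    for other, other_ratings in all_ratings.items():
        if other == user:
            continue
        similarity = cosine_similarity(all_ratings[user], other_ratings)
        for item, rating in other_ratings.items():
            if item not in all_ratings[user]:
                scores[item] = scores.get(item, 0.0) + similarity * rating
    return sorted(scores, key=scores.get, reverse=True)


suggestions = recommend("alice", ratings)
```

Alice's tastes are close to Bob's, so the item Bob rated highly that she has not tried ranks first. The item rated only by Carol, whose tastes differ from hers, ranks lower.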

Fraud detection

In the banking sector, algorithms monitor your transactions to detect suspicious activity. If you use your card abroad or make an unusually large purchase, AI can flag potential fraud. These systems are essential for securing online payments and protecting users in the financial services sector.
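The two signals mentioned here, an unusually large amount and a card used abroad, can be turned into a very simple rule-based check. This is a deliberately naive sketch. The threshold, the function name, and the data are assumptions, and production systems combine many more signals with learned models.

```python
from statistics import mean, stdev


def flag_transaction(amount, country, history_amounts, home_country, z_threshold=3.0):
    """Return the reasons (possibly none) why a transaction looks suspicious."""
    reasons = []
    mu = mean(history_amounts)
    sigma = stdev(history_amounts)
    # z-score: how many standard deviations above the usual spending level.
    if sigma > 0 and (amount - mu) / sigma > z_threshold:
        reasons.append("unusually large amount")
    if country != home_country:
        reasons.append("foreign transaction")
    return reasons


# Hypothetical spending history for one cardholder, in euros.
history = [20, 35, 50, 25, 40, 30]

large_purchase = flag_transaction(900, "FR", history, "FR")
usual_purchase = flag_transaction(45, "FR", history, "FR")
purchase_abroad = flag_transaction(45, "US", history, "FR")
```

A flag is only a prompt for review. In practice, a flagged transaction would usually trigger a confirmation step rather than an automatic refusal.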

Weather forecasting

Traditional AI models analyze large amounts of data (temperature, humidity, wind) to forecast the weather. Used by meteorological services such as Météo-France, they help farmers plan their harvests, cities prepare for storms, and airlines adjust their flight schedules.

Logistics Optimization

In the warehouses of giants like Amazon, AI systems calculate the most efficient routes for the robots that move packages. They reduce delivery times by optimizing every step of the supply chain, a key challenge for global trade.
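As a rough illustration of route calculation, breadth-first search finds the shortest path for a single robot on a grid where some cells are blocked by shelves. The layout is hypothetical. Real warehouse systems also have to coordinate many robots, avoid congestion, and account for timing, none of which is modeled here.

```python
from collections import deque


def shortest_path_length(grid, start, goal):
    """Fewest moves from start to goal on a grid (0 = free, 1 = blocked), or None."""
    rows, cols = len(grid), len(grid[0])
    queue = deque([(start, 0)])
    visited = {start}
    while queue:
        (row, col), distance = queue.popleft()
        if (row, col) == goal:
            return distance
        for d_row, d_col in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nxt = (row + d_row, col + d_col)
            inside = 0 <= nxt[0] < rows and 0 <= nxt[1] < cols
            if inside and grid[nxt[0]][nxt[1]] == 0 and nxt not in visited:
                visited.add(nxt)
                queue.append((nxt, distance + 1))
    return None  # the goal cannot be reached


# Hypothetical warehouse floor: the robot must go around the shelves in row 1.
warehouse = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
    [0, 1, 1, 0],
]

moves = shortest_path_length(warehouse, (0, 0), (3, 0))
```

Breadth-first search explores cells in order of distance from the start, so the first time it reaches the goal it has found a shortest route.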

Non-generative natural language processing (NLP)

Apart from generative models, there are many non-generative NLP systems that are nonetheless very useful:

- Sentiment analysis to gauge customer sentiment on social media

- Named entity recognition (NER) to identify names, dates, and places in a text, for example so that it can be anonymized

- Document classification (emails, complaints, contracts, etc.)

- Spam detection, grammar correction, machine translation (e.g., Google Translate historically relied on statistical approaches before neural networks)

Anomaly detection

Widely used in cybersecurity, predictive maintenance, and healthcare:

- Identifying abnormal network behavior

- Detecting weak signals in an MRI scan

- Detecting an industrial machine that is beginning to deteriorate

Often based on models such as autoencoders, decision forests, or SVMs, this “invisible” AI focuses more on prevention than on reaction.
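The idea behind anomaly detection can be shown with a far simpler stand-in than the models named above: a robust score based on the median and the median absolute deviation (MAD). The sensor readings are invented, and the 3.5 threshold is a common rule of thumb assumed here, not a universal setting.

```python
from statistics import median


def mad_anomalies(readings, threshold=3.5):
    """Return the indices of readings that lie far from the median, in MAD units."""
    center = median(readings)
    mad = median([abs(x - center) for x in readings])
    if mad == 0:
        return []  # no spread in the data, so nothing can be scored
    # 0.6745 rescales the MAD so the score is comparable to a z-score.
    return [
        index
        for index, x in enumerate(readings)
        if 0.6745 * abs(x - center) / mad > threshold
    ]


# Hypothetical vibration levels from a machine, with one suspicious spike.
vibration = [0.50, 0.52, 0.49, 0.51, 0.50, 0.53, 0.48, 1.20, 0.51, 0.50]

suspicious = mad_anomalies(vibration)
```

The median and the MAD are barely affected by the outlier itself, which is why this score is often preferred to one based on the mean and standard deviation when the data may already contain anomalies.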

Search engines

Even before generative AI, supervised learning algorithms were ranking search results, autocompleting your queries, and filtering content. Google, Bing, and Qwant were already using machine learning to personalize and contextualize information, without generating a single sentence.

What these AIs have in common:

These artificial intelligence systems, often referred to as “traditional,” do not seek to impress. They do not shine in the spotlight, do not create in the artistic sense of the word, and do not generate spectacular images or texts. Their focus lies elsewhere: in reliability, precision, and efficiency.

Found in finance, healthcare, manufacturing, logistics, cybersecurity, meteorology, and retail, they solve real-world problems, often complex ones, and work quietly behind the scenes. Without them, many transportation systems, digital security systems, and critical infrastructures could not function as they do today.

Given the enthusiasm surrounding generative AI, it is essential to remember that it represents only a small part of the vast ecosystem of artificial intelligence. For behind every visible feat lies a forest of algorithms that are often overlooked but indispensable.

Generative AI ≠ General AI

Another important point: many people confuse generative AI with general AI, that is, AI capable of reasoning, understanding, and adapting like a human. That is not the case today. Generative models are statistical and have no consciousness; they have no real understanding of the world. They produce plausible results, not necessarily true ones.

As AI researcher Gary Marcus and others have argued, these systems are impressive, but they don’t think. In the phrase coined by Emily Bender and her co-authors, they are “stochastic parrots.”

4- AI is everywhere: open your eyes!

Artificial intelligence isn’t just about writing poems or generating images. It’s already woven into our daily lives, often so seamlessly that we don’t even notice it anymore. And yet, it’s all around us, sometimes at the heart of crucial decisions.

Health – Cancer Screening

In hospitals, algorithms analyze radiological images such as mammograms and CT scans to detect abnormalities. These systems are trained on thousands of images annotated by doctors, learning to distinguish normal structures from suspicious ones (masses, calcifications, irregular shapes, etc.).

They do not replace doctors, but serve as a second set of eyes, speeding up diagnoses and reducing error rates. Solutions such as Google Health, IBM Watson Health, and Aidoc are already in use in several countries.

Transportation – Self-driving cars

Vehicles like those from Tesla, Waymo, and Cruise use sensors such as cameras, lidar, and radar (the exact mix varies by manufacturer) to perceive their surroundings. Computer vision algorithms detect pedestrians, traffic lights, and road signs, while other models make real-time decisions: accelerating, slowing down, or changing lanes.

The system relies on a combination of CNNs (for visual analysis), predictive models (to anticipate behavior), and control algorithms for action. This is an extremely complex use case, but it illustrates just how deeply AI can be integrated into a critical decision-making system.

Other AI tools you use without realizing it:

- Predictive keyboards on smartphones: they use sequence models (such as RNNs) to predict the next word.

- Spam filters in email: they use Bayesian models or neural networks to classify messages.

- Voice assistants: Alexa, Siri, and Google Assistant combine speech recognition, NLP, and knowledge bases.

- Machine translation: services such as Google Translate and DeepL are based on transformer models.
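To make one item on this list concrete, here is a minimal sketch of a Bayesian spam filter: a naive Bayes classifier with add-one smoothing. The four training messages are invented and far too few for real use. The sketch only shows the principle of comparing how likely a message's words are under each class.

```python
from collections import Counter
from math import log

# Tiny invented training set: (message, label).
training = [
    ("win money now", "spam"),
    ("cheap money offer", "spam"),
    ("meeting at noon", "ham"),
    ("project meeting notes", "ham"),
]


def train(examples):
    """Count words per class and messages per class."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    doc_counts = Counter()
    for text, label in examples:
        doc_counts[label] += 1
        word_counts[label].update(text.split())
    vocabulary = set(word_counts["spam"]) | set(word_counts["ham"])
    return word_counts, doc_counts, vocabulary


def classify(text, model):
    """Pick the class with the highest log-probability for the message."""
    word_counts, doc_counts, vocabulary = model
    total_docs = sum(doc_counts.values())
    scores = {}
    for label, counts in word_counts.items():
        score = log(doc_counts[label] / total_docs)  # class prior
        total_words = sum(counts.values())
        for word in text.split():
            if word in vocabulary:  # words never seen in training are ignored
                score += log((counts[word] + 1) / (total_words + len(vocabulary)))
        scores[label] = score
    return max(scores, key=scores.get)


model = train(training)
first_label = classify("cheap money", model)
second_label = classify("meeting notes", model)
```

Working with logarithms avoids multiplying many small probabilities together. The add-one smoothing keeps a word that was seen in only one class from driving the other class's probability to zero.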

Today, I am a data scientist and a faculty researcher at aivancity. At the AI Clinic, I mentor students who are working on real-world projects in collaboration with companies.

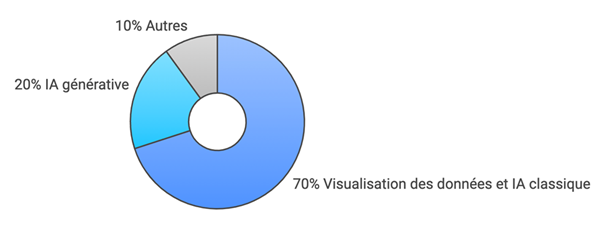

What stands out is that 70% of the projects involve traditional AI or data visualization: anomaly detection, sales forecasting, resource optimization…

Very few of them involve generative AI. And this reflects an important reality: in businesses, the vast majority of practical applications of AI do not involve content generation, but rather data analysis, prediction, and optimization.

AI isn’t some futuristic gadget: it’s already here—in our phones, in our hospitals, in our banks, in our cars. You can’t see it, but it’s at work. And to understand it, we need to open our eyes and look far beyond robots that write poems.

5- Let’s not let generative AI steal the spotlight

Generative AI is captivating. It draws, writes, composes, and even codes… It has everything it takes to fascinate engineers, amaze the curious, and worry experts. It has transformed the image of artificial intelligence in just a few months. But this fascination, while legitimate, risks blinding us.

We must not allow this highly publicized “star” to overshadow the critical issues related to other areas of AI—areas that may be less spectacular but are just as, if not more, crucial to our societies.

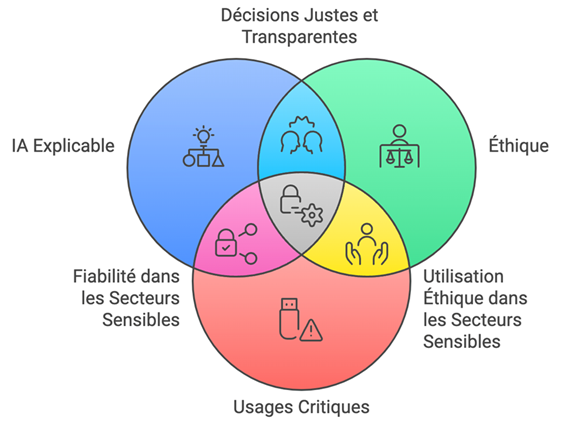

Explainable AI (XAI)

Today, many AI systems make decisions in critical situations: approving a bank loan, detecting cancer, recommending an action to an autopilot system… But how can we justify a decision made by a deep learning model? How can we explain why a particular profile is deemed risky, or why a specific anomaly is flagged?

Explainable AI (XAI) is a rapidly growing field. It aims to make models more understandable, more transparent, and, above all, interpretable by humans—even those without a technical background. Methods such as SHAP, LIME, and heatmaps make it possible to visualize the influence of each variable or pixel on a decision.
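The idea of measuring each variable's influence can be sketched with a simple perturbation approach: replace one feature at a time with a reference value and see how much the model's output changes. This is neither SHAP nor LIME, only a simplified relative of such methods. The scoring model, its weights, and the applicant data are all hypothetical.

```python
def credit_risk_score(applicant):
    """Hypothetical risk model with invented weights (higher means riskier)."""
    return (
        0.5 * applicant["debt_ratio"]
        + 0.25 * applicant["late_payments"]
        - 0.125 * applicant["years_employed"]
    )


def perturbation_attributions(model, instance, baseline):
    """Change in the score when each feature is reset to its baseline value."""
    full_score = model(instance)
    attributions = {}
    for feature in instance:
        perturbed = dict(instance)
        perturbed[feature] = baseline[feature]
        attributions[feature] = full_score - model(perturbed)
    return attributions


# Hypothetical applicant and a reference "typical" profile.
applicant = {"debt_ratio": 0.75, "late_payments": 4, "years_employed": 2}
typical = {"debt_ratio": 0.25, "late_payments": 0, "years_employed": 8}

explanation = perturbation_attributions(credit_risk_score, applicant, typical)
```

A positive value means the feature pushes this applicant's score above what the typical profile would get. For a linear model like this one the attributions are exact. For a deep network, interactions between features make the picture more complicated, which is what methods such as SHAP and LIME try to handle.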

AI Ethics

The development of AI also raises fundamental questions:

- Who owns the data used to train these models?

- How can we avoid discriminatory bias in algorithmic decisions?

- What should we do about AI systems that “hallucinate” facts or spread stereotypes?

- How can we ensure that AI systems are fair, inclusive, and accountable?
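One of these questions, discriminatory bias, can at least be partly measured. The sketch below computes approval rates per group and the gap between them, a check often called demographic parity. The decisions are invented, and a gap alone does not prove discrimination. It is a signal that calls for closer examination.

```python
def approval_rates(decisions):
    """Share of approved decisions per group, from (group, approved) pairs."""
    totals, approved = {}, {}
    for group, is_approved in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if is_approved else 0)
    return {group: approved[group] / totals[group] for group in totals}


def demographic_parity_gap(decisions):
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Hypothetical loan decisions for two groups of applicants.
loan_decisions = [
    ("group_a", True), ("group_a", True), ("group_a", True), ("group_a", False),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

rates = approval_rates(loan_decisions)
gap = demographic_parity_gap(loan_decisions)
```

Other fairness criteria exist, for example comparing error rates between groups, and they cannot always be satisfied at the same time. Choosing among them is an ethical decision as much as a technical one.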

Frameworks such as the OECD AI Principles and the European Union’s AI Act aim to regulate the use of these technologies.

But there remains a gap between regulatory intentions and actual practices. This is where practitioners, researchers, educators, and citizens have a crucial role to play.

Critical applications and robustness

In critical sectors (healthcare, energy, finance, transportation, etc.), AI must be reliable, robust, and thoroughly tested. It is not enough for a model to perform well “on average”; it must perform well in extreme cases, under real-world conditions, and sometimes even in real time.

For example:

- Medical AI must be certified and clinically tested

- Predictive maintenance AI must never miss a weak signal

- Algorithmic trading AI must react in milliseconds without disrupting the market

These critical applications require precision, traceability, and technical expertise—a far cry from the creative experimentation of generative models.

In the classroom, at work, in society…

As a professor and researcher, I see that these issues are of great interest to students. At the AI Clinic, several projects focus specifically on:

- Interpreting models

- Visualizing decisions

- Detecting biases in datasets

- Assessing the robustness of a system

And it is often these projects—which may seem less “impressive” at first glance—that are the most meaningful for partner companies.

Generative AI is in the spotlight, but it shouldn’t overshadow other priorities. If we want artificial intelligence to be truly useful, equitable, and sustainable, then we also need to address what happens behind the scenes: the traceability of decisions, the ethics of models, and the robustness of critical systems.

Because the real revolution will not come about solely through generated images or written text, but through the trust we place in the systems we build.

6- A Call for a Broader Perspective

Artificial intelligence is a remarkable human, scientific, and technological endeavor. It has the potential to transform medicine, revolutionize industry, improve education, optimize resource use, and help combat climate change… But only if we view it in all its richness, complexity, and responsibility.

Reducing AI to its most visible tools means overlooking its most impactful applications. It ignores the fact that, before generating images or writing text, AI observes, predicts, corrects, optimizes, secures, and helps us understand. It is everywhere, in subtle yet decisive ways.

Raising a generation that is aware and capable

As an instructor at the AI Clinic at aivancity, I see every day how students, once confronted with real-world business challenges, realize that AI is not limited to technological innovation: it is also a culture of decision-making, analysis, and accountability.

Whether it’s building a robust predictive model, identifying biases in a dataset, or clearly explaining an algorithmic recommendation to a non-technical client, AI requires more than just technical skills: it demands sound judgment, ethical considerations, and the ability to communicate effectively.

And where do you stand in this revolution?

Whether you’re a curious observer, a user, a developer, a philosopher, a manager, a journalist, a researcher… you have a role to play.

- Look beyond the trends.

- Ask questions about the models you use.

- Ask for explainability.

- Reject “black-box” AI systems that are imposed without careful consideration.

- Promote simple, practical, and transparent practices.

Responsible AI is AI that is understood

If we want artificial intelligence to be a tool that serves humanity, then we must understand it in its entirety—its history, its applications, its limitations, and the challenges it presents. Our fascination must not overshadow our understanding.

AI is neither good nor bad in and of itself: it is what we make of it.

Closing Remarks

“AI is in our hands. But we still need to approach it with our eyes wide open.”